Constraints on Data-driven Systems in Public Education

Why haven’t public school systems been able to better use data-driven decision making to improve outcomes for their students?

A typical public school classroom is run in 2017 similarly to how it was run in 1917. Many public schools still have a hard time achieving basic graduation rates, and it eludes them to diagnose what methods work and which don’t. But school systems have tons of great longitudinal data. They’ve used it to compare student performance, set exam benchmarks, allocate school resources and hold administrators accountable to targets. Yet, our public schools tend to be far behind in fully adopting analytics and data-driven systems at a micro level to improve teaching and learning outcomes.

The Minneapolis Public Schools (MPS) for example set 3-year strategic goals in 2008 but by 2011 had to extend the timeline on these goals to 2014 due to lack of conclusive results or improvements in student performance. Academic success seems to evade some schools, and even when improvements are made, it’s hard to pin point what exactly drove them. Why have public education systems like the MPS largely missed the technological advancements in data analytics even when they’ve tried?

There are at least 3 key constraints to the effective use of data-driven teaching.

1) It’s difficult to define the variables.

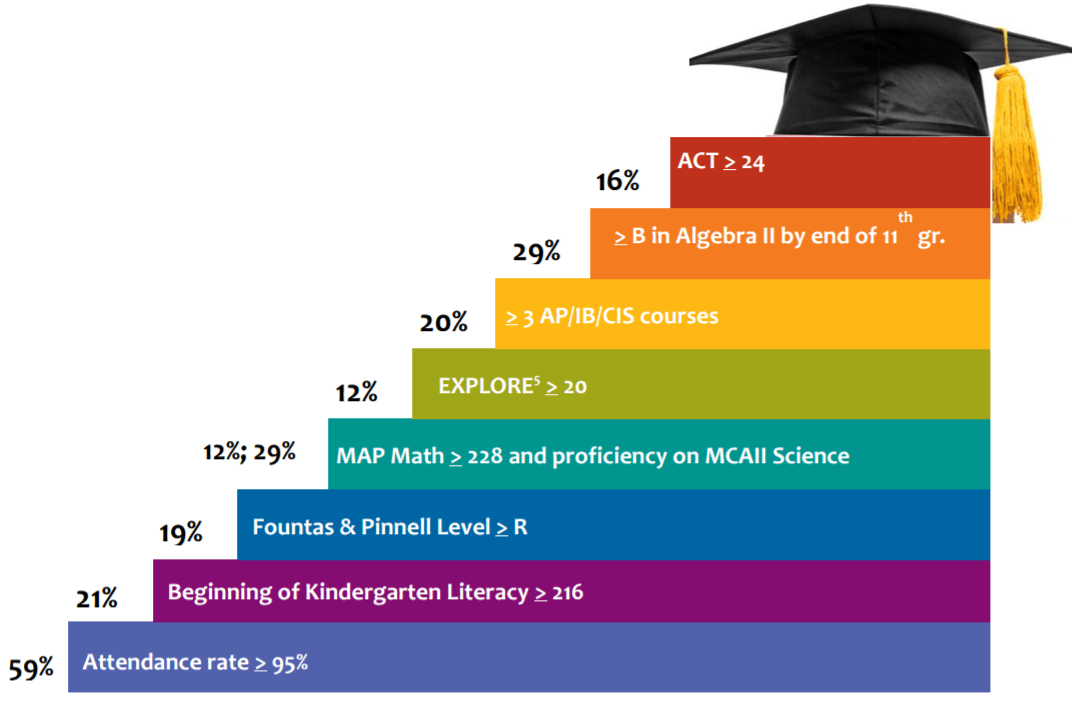

It’s hard to operationally define long-term academic success of a child. First, one defines the core competencies a student needs at various schooling stages (e.g. reading comprehension, logic, analytical skills, creativity). Each competency must then be translated to a measurable variable (e.g. a test score). These variables must then be fit into a model that determines a successful outcome (call it, entry into college). Consider the below from MPS’s “Steps to Student Success” project.

The MPS identified metrics, at various K-12 grade levels, that were the statistically strongest predictors of college entry. There is an implicit model fitting these independent variables. MPS then set goals for each of these metrics and focused its administrators on reaching them. However, a mistake in (a) defining the variables, (b) measuring the variables, and/or (c) determining a cutoff for what is acceptable, could all be very costly, especially given the long time horizon of strategic planning in school systems.

2) It’s difficult to establish causation.

To tease out a causation, one needs a controlled trial. One would need to hold certain variables constant and manipulate others at a time. However, A/B testing might be difficult to do in the K-12 context due to ethical issues. The idea of public schools “experimenting on children” is not politically palatable. Charter schools or private educational tech companies might serve well in this regard.

3) Implementation requires educating the educators.

Data collection is one barrier, as is the analysis of and use of the collected data. Many teachers don’t believe in the need for data-driven decision making. One NYC public school teacher told me there is “too much data. Data is bull***t.” Others are overwhelmed by the amount of data they need to collect and track. One way this issue is being tackled is by including a data literacy component in state teaching license requirements.

If we can overcome some of the constraints, perhaps we can move toward a model of high autonomy of schools and classrooms to provide customized learning experiences for students. We might be able to use live, real-time data to measure progress of a student (minute-to-minute, during the lesson, and post-lesson), provide support, and deliver the right content in the right format to best educate that student.

Sources

Minneapolis Public Schools Strategic Plan

Interviews with current and former teachers

Afaf – thank you so much for bringing up this interesting discussion. I have always wondered why education is the slowest-moving sector in terms of technological development and I agree with the 3 points you mentioned above. I think given the lack of innovation and resistance to change in the current public education system, the innovation can only come from outside. Many tech ventures, especially on the west coast, are now working on such things but one important variable many of them fail to consider is – public-private partnership. Since education funding is concentrated in the public sector in US, it is extremely important for tech startups to demonstrate success and collaborate with public stakeholders.